Evaluating Video Models as Simulators of Multi-Person Pedestrian Trajectories

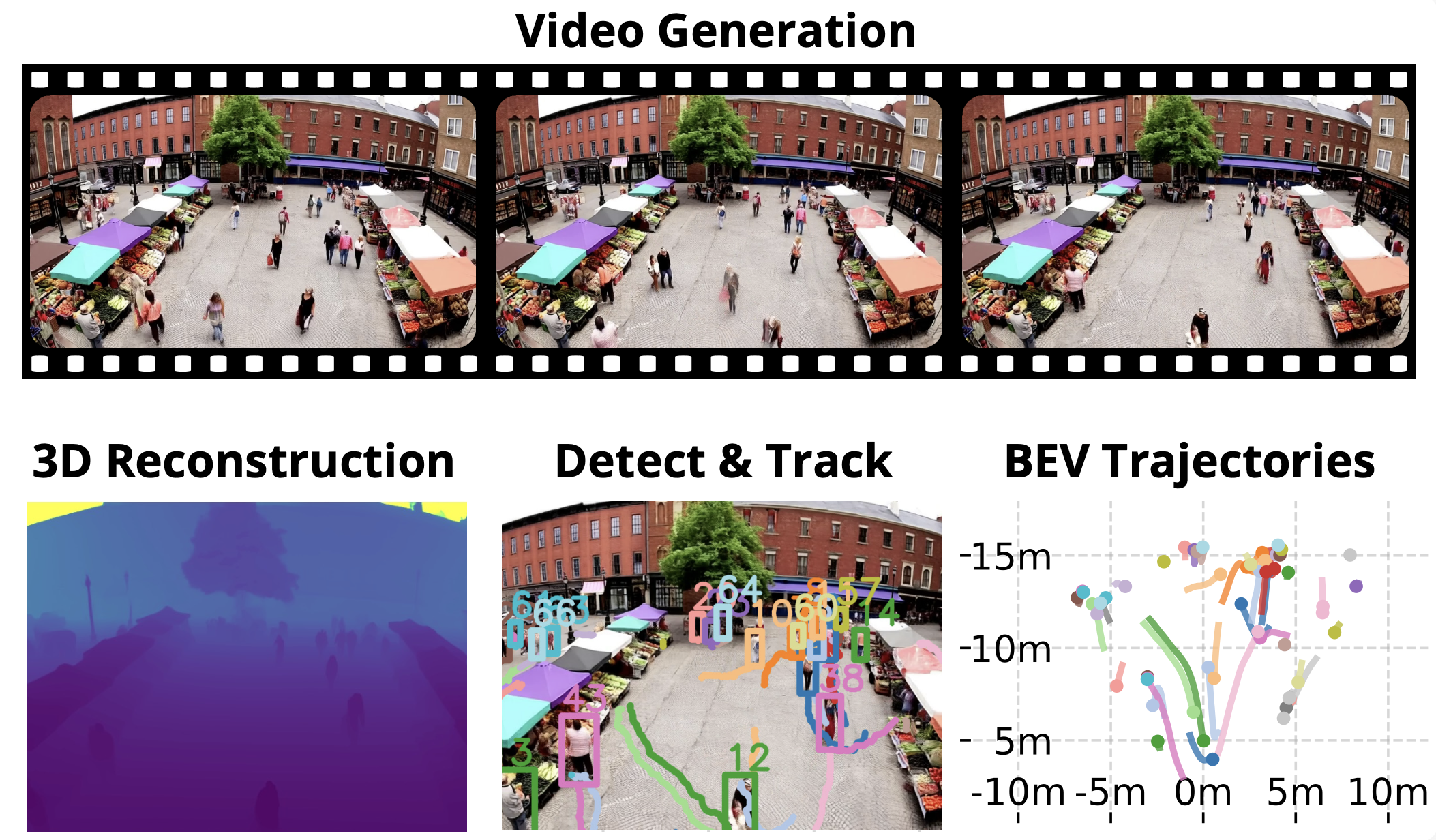

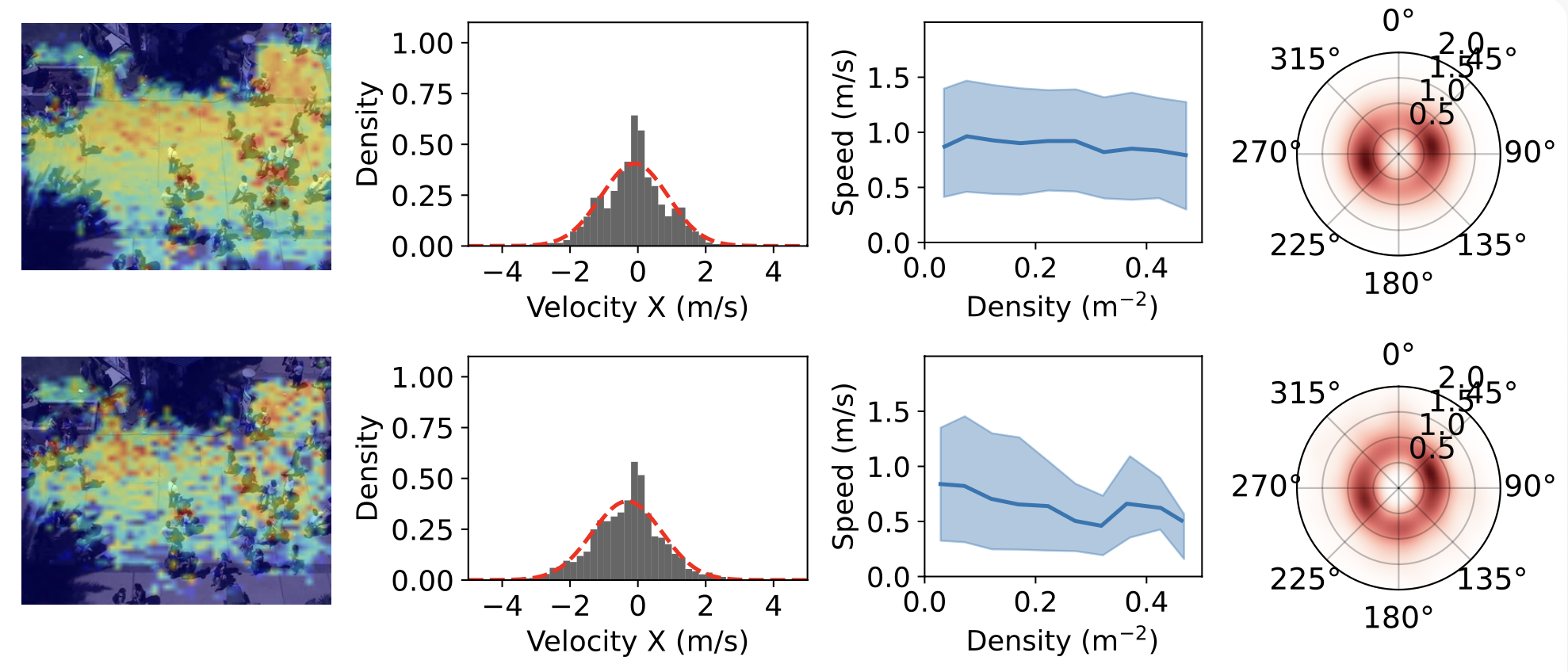

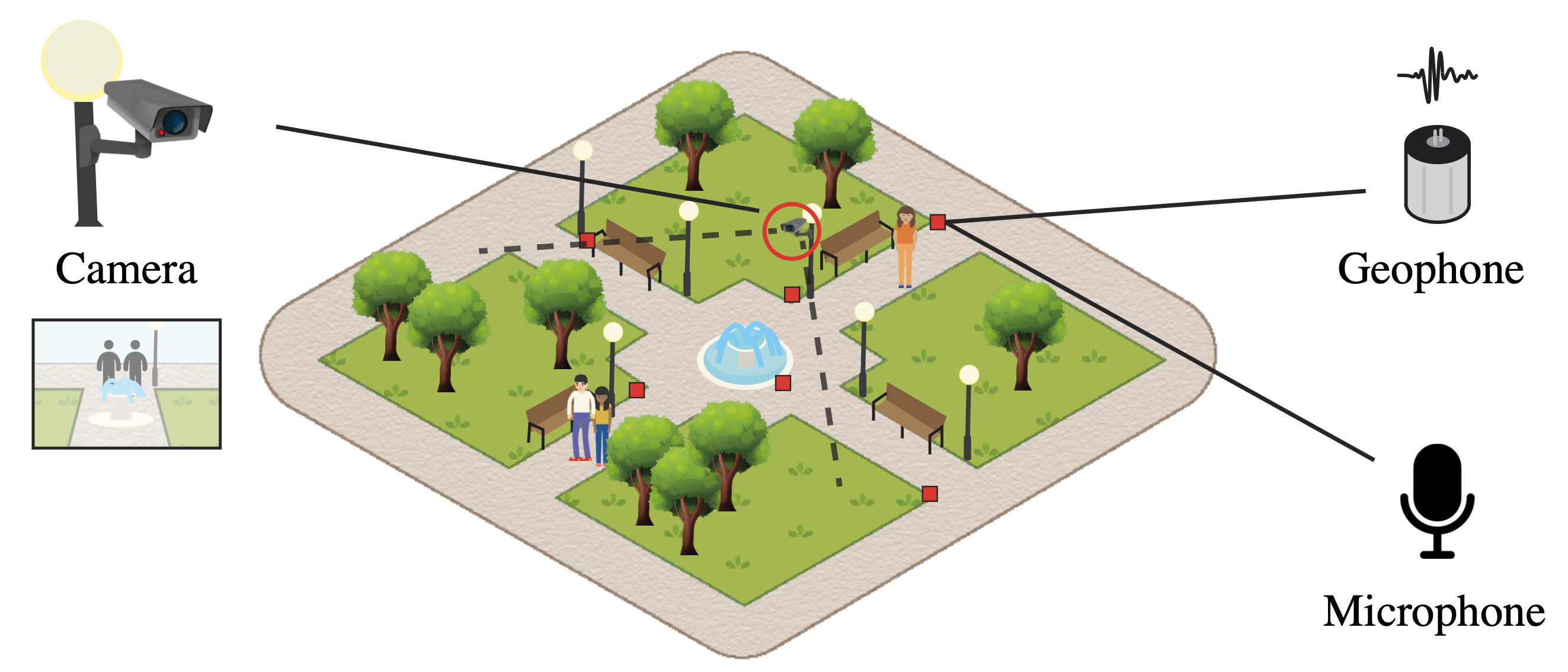

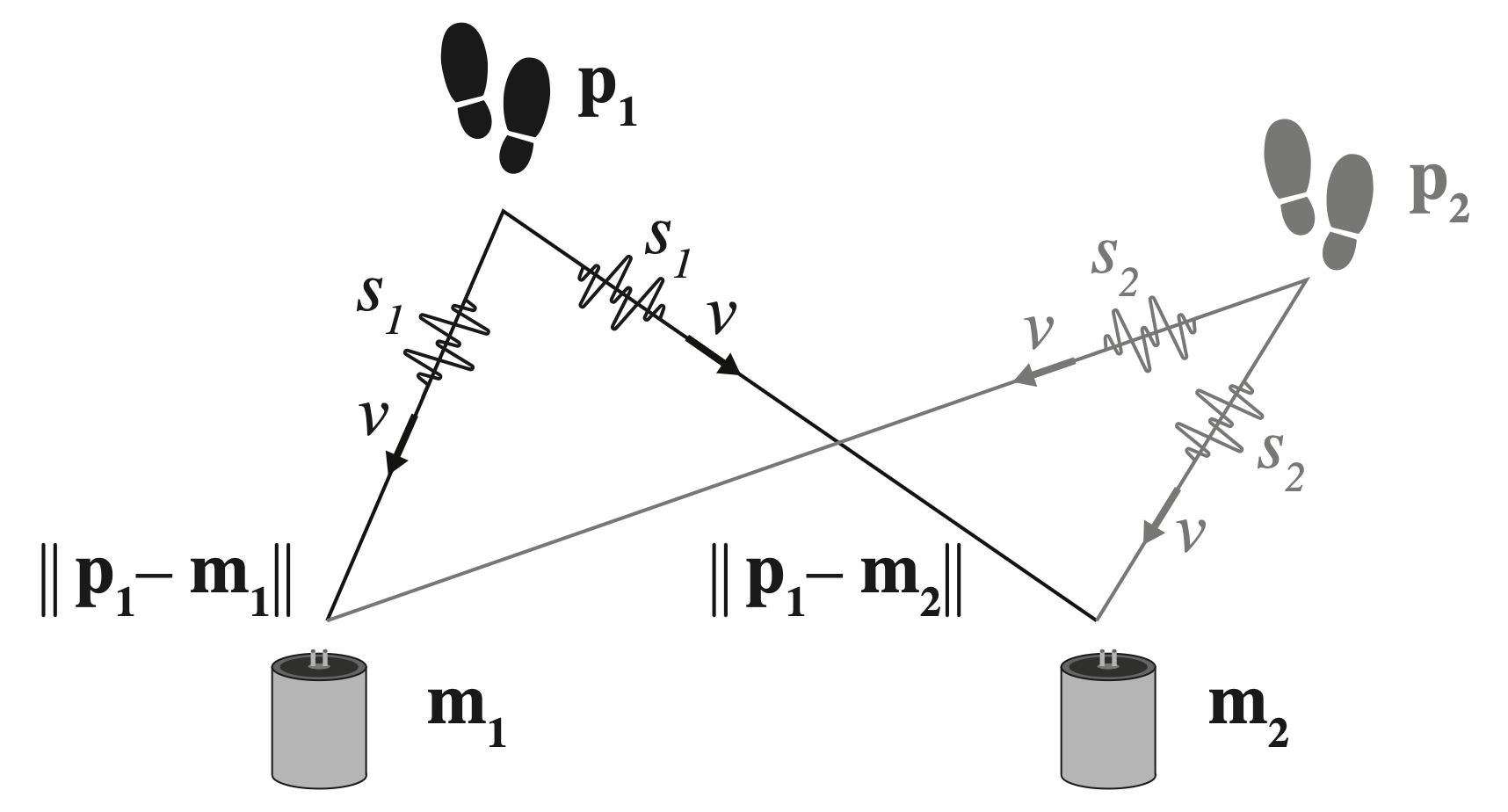

We propose an evaluation protocol to benchmark text-to-video (T2V) and image-to-video (I2V) models as implicit simulators of pedestrian dynamics. We use 3D reconstruction and depth estimation to extract pedestrian trajectories without known camera parameters.
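To make the trajectory-extraction idea concrete, the following is a minimal sketch of how a per-frame pedestrian track with estimated depth could be lifted to a top-down trajectory. It is not the pipeline used in this work: the pinhole camera model, the centered principal point, the hand-picked focal length `focal_px`, and the helper names `backproject`, `ground_trajectory`, and `mean_speed` are all assumptions made for illustration. In practice the focal length would itself have to be estimated (for example by the 3D reconstruction step), since no camera parameters are known.

```python
import math


def backproject(u, v, depth, focal_px, cx, cy):
    """Pinhole back-projection of pixel (u, v) at a given depth into camera coordinates.

    Assumption: square pixels and a single focal length in pixel units.
    """
    x = (u - cx) * depth / focal_px
    y = (v - cy) * depth / focal_px
    return (x, y, depth)


def ground_trajectory(track, focal_px, width, height):
    """Convert a track of (u, v, depth) samples into top-down (x, z) positions.

    Assumption: the principal point sits at the image center and the camera
    is roughly level, so the camera x-z plane approximates the ground plane.
    """
    cx, cy = width / 2.0, height / 2.0
    points = (backproject(u, v, d, focal_px, cx, cy) for u, v, d in track)
    return [(x, z) for x, _, z in points]


def mean_speed(traj, fps):
    """Average speed along the trajectory, in depth units per second."""
    if len(traj) < 2:
        return 0.0
    path_length = sum(math.dist(a, b) for a, b in zip(traj, traj[1:]))
    duration = (len(traj) - 1) / fps
    return path_length / duration


if __name__ == "__main__":
    # Hypothetical track: foot-point pixel coordinates plus estimated depth per frame.
    track = [(320, 400, 5.0), (330, 400, 5.0), (340, 400, 5.0)]
    traj = ground_trajectory(track, focal_px=500.0, width=640, height=480)
    print(traj)
    print(mean_speed(traj, fps=10.0))
```

Trajectories in this form can be compared against real pedestrian data only if the depth estimates are metric; with relative depth, the recovered speeds are defined up to an unknown scale.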